Enable the latest DSP engine with 192 kHz sampling, 64-bit processing, and 128 voices, with latency under 6 ms over a wired link. This baseline keeps dynamics clean while leaving room for dense layers of realistic tonal color.
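To check whether a buffer size fits the 6 ms budget, buffer latency can be estimated as frames divided by sample rate. The sketch below is illustrative arithmetic only: the function names and the candidate buffer sizes are assumptions, and real round-trip latency also includes driver and converter overhead that is not modelled here.

```python
SAMPLE_RATE_HZ = 192_000   # sampling rate from the baseline
LATENCY_BUDGET_MS = 6.0    # latency target from the baseline


def buffer_latency_ms(frames: int, sample_rate_hz: int = SAMPLE_RATE_HZ) -> float:
    """Latency contributed by one audio buffer, in milliseconds."""
    return frames / sample_rate_hz * 1000.0


def largest_buffer_within_budget(candidates, budget_ms: float = LATENCY_BUDGET_MS):
    """Return the largest candidate buffer size (frames) that stays under the budget."""
    fitting = [n for n in candidates if buffer_latency_ms(n) < budget_ms]
    return max(fitting) if fitting else None


if __name__ == "__main__":
    sizes = (256, 512, 1024, 2048)  # hypothetical buffer sizes
    for frames in sizes:
        print(frames, "frames:", round(buffer_latency_ms(frames), 2), "ms")
    print("largest fitting buffer:", largest_buffer_within_budget(sizes))
```

With these assumed sizes, 1024 frames (about 5.33 ms) is the largest buffer that stays under 6 ms at 192 kHz.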

Think from several angles: spatial cues, spectral shaping, and adaptive envelopes. Facial tracking can drive gentle modulation, adding subtle movement while keeping processing efficient enough to create engaging textures.

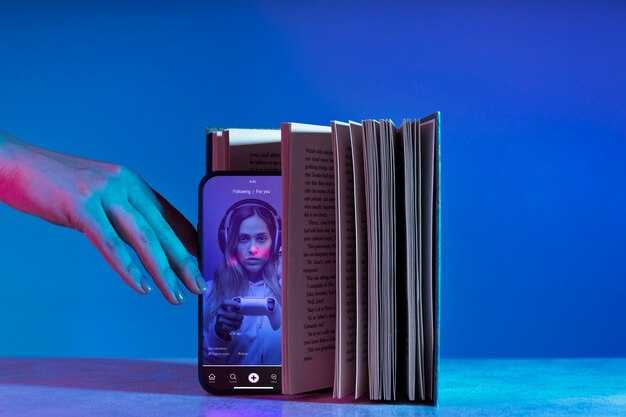

As a partner with domoai, marketers gain many ways to share polished tones. Presets showcase artistry across products and give creative teams easy onboarding while preserving high‑fidelity processing.

Key aspects include dynamic range, spectral balance, and latency budgets. Keep CPU load at or below 60% during peak sessions, and always verify it with a simple UI. That headroom leaves room for more nuance in real-time modulation. Highlight micro‑variations with subtle envelopes, and share presets across devices for consistent results.

Practical setup tips: route only essential modules to the master bus, keep oversampling modest, and enable realistic room cues via pre‑computed impulse responses. With this approach, users can experiment with sonic textures quickly, which shortens iteration cycles and boosts productivity in product pipelines. That's a quick win for teams seeking measurable outcomes.

Practical Workflows for Visual World Synthesizer

Begin with a repeatable, modular build: split the input flow into files, images, scene maps, and control signals, and connect them via a lightweight object queue. Maintain a photodump of source materials named "source", with clear labels for mood, texture, and form. This setup saves time and speeds up iterations.

Establish a compact taxonomy: object-driven presets, parameter slots, and media feeds. For each asset, attach tags: look, mood, scale, and angle. Use tags to mark style presets and introduction cards, and to keep the look stable across frames, preventing drift during runs.
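A tagging scheme like this can be sketched as a small data structure. The example below is a minimal illustration, assuming a hypothetical `Asset` record and `find_assets` helper; only the four tag names (look, mood, scale, angle) come from the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    """One media asset carrying the four tags named in the text."""
    path: str
    look: str
    mood: str
    scale: str
    angle: str


def find_assets(assets, **wanted):
    """Return assets whose tags match every keyword given, e.g. mood="calm"."""
    return [
        a for a in assets
        if all(getattr(a, tag, None) == value for tag, value in wanted.items())
    ]


if __name__ == "__main__":
    # Hypothetical library entries, for illustration only.
    library = [
        Asset("images/dune_01.png", look="matte", mood="calm", scale="wide", angle="low"),
        Asset("images/city_07.png", look="neon", mood="tense", scale="close", angle="high"),
    ]
    print(find_assets(library, mood="calm"))
```

Filtering by tag before each run is one way to keep the same look across frames, since every render pulls from an explicitly matched set.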

Adopt an image-generation loop: feed images from assets into generators, then queue outputs to a look-alike cache. Record an explainer for each asset detailing its purpose and expected image generations. If results diverge from goals, adjust parameters and re-run; otherwise, keep current settings.
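The adjust-and-re-run loop can be sketched as follows. This is a minimal outline under stated assumptions: `generate`, `score`, and `adjust` are hypothetical callables supplied by the caller, and the target score and run limit are illustrative defaults, not values from any particular generator.

```python
def run_generation_loop(generate, score, params, adjust, target=0.8, max_runs=5):
    """Re-run generation until the score meets the target or runs are exhausted.

    generate(params) -> output, score(output) -> float, adjust(params) -> new params.
    All three callables are placeholders provided by the caller.
    """
    history = []
    for _ in range(max_runs):
        output = generate(params)
        result = score(output)
        history.append((dict(params), result))  # keep a log of each generation
        if result >= target:
            break                                # goals met: keep current settings
        params = adjust(params)                  # results diverge: adjust and re-run
    return params, history


if __name__ == "__main__":
    # Toy stand-ins: the "output" is just the strength value itself.
    final, log = run_generation_loop(
        generate=lambda p: p["strength"],
        score=lambda out: out,
        params={"strength": 0.25},
        adjust=lambda p: {"strength": p["strength"] + 0.25},
    )
    print(final, len(log))
```

The returned history doubles as the per-asset record of what was tried and how each run scored.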

Integrate an upscaler on targeted inputs before synthesis, then compare the results with the baseline to decide whether the benefits outweigh the artifacts. Make sure this step adds no latency. Keep a simple side-by-side gallery to review changes.

Blend traditional mapping with dynamic controls, and keep the ultimate look target in mind. Start with broad color mapping, then layer micro-gestures via parameter animations. Treating control maps like a game puzzle boosts engagement and enhances texture and feel. Experiment with micro-gesture shaping techniques to tighten rhythm.

Organize assets for swift access: keep separate folders for files, images, and metadata, and maintain a stable log of generations and reaction notes. This layout handles multiple asset types, supports quick swap-ins, and preserves historical context for each project.

Performance tuning: batch renders, cap concurrency, cache results, and precompute photodump snapshots. Use lightweight probes to validate progress before rendering full sequences.
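The tuning steps above (batching, a concurrency cap, caching, and lightweight probes) can be sketched with the Python standard library. Everything named here is an assumption for illustration: `render_frame` is a placeholder that returns a label instead of pixels, and the worker cap of 4 is arbitrary.

```python
from concurrent.futures import ThreadPoolExecutor
from functools import lru_cache

MAX_WORKERS = 4  # assumed concurrency cap


@lru_cache(maxsize=256)
def render_frame(scene: str, frame: int) -> str:
    """Placeholder render; a real renderer would return pixels or a file path."""
    return f"{scene}:{frame:04d}"


def render_batch(scene: str, frames):
    """Render a batch of frames with capped concurrency, preserving input order."""
    with ThreadPoolExecutor(max_workers=MAX_WORKERS) as pool:
        return list(pool.map(lambda f: render_frame(scene, f), frames))


def probe(scene: str, total_frames: int, samples: int = 3):
    """Render a few evenly spaced frames as a cheap check before the full sequence."""
    step = max(1, total_frames // samples)
    return render_batch(scene, range(0, total_frames, step)[:samples])


if __name__ == "__main__":
    print(probe("intro", 100))           # quick probe first
    print(len(render_batch("intro", range(100))))
    print(render_frame.cache_info())     # probe frames are served from cache
```

Running the probe first means its frames are already cached when the full batch renders.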

Another practical tip: schedule concise review times, iterate on small changes, and keep notes. This habit shortens time between experiments and builds confidence in overall workflow.

Mapping Visual Cues to Synthesis Parameters

Recommendation: Link a single frame cue to a parameter, then expand gradually through swap-based refinements.

Build a library of backgrounds and textures, each tied to a parameter envelope. An editor can tag assets with target values, while dedicated-manager workflows keep scenes consistent.

When aiming for realistic results, map motion footage frames and color cues to low-pass cutoff, resonant peaks, and dynamics. Keep one frame reference as anchor. Consider techniques like soft clipping for warmth, or time-aligned LFOs to modulate pitch and formant-like shifts. Evaluate cost vs quality within production budget models.
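As one possible reading of this mapping, the sketch below converts a frame brightness value into a low-pass cutoff and applies a tanh soft clipper for warmth. The cutoff range, the logarithmic curve, and the `drive` default are assumptions chosen for illustration; the text does not specify them.

```python
import math

CUTOFF_MIN_HZ = 200.0      # assumed lower bound of the cutoff range
CUTOFF_MAX_HZ = 12_000.0   # assumed upper bound of the cutoff range


def brightness_to_cutoff(brightness: float) -> float:
    """Map frame brightness in [0, 1] to a low-pass cutoff on a logarithmic scale."""
    b = min(1.0, max(0.0, brightness))  # clamp out-of-range cues
    return CUTOFF_MIN_HZ * (CUTOFF_MAX_HZ / CUTOFF_MIN_HZ) ** b


def soft_clip(sample: float, drive: float = 2.0) -> float:
    """Tanh soft clipper, normalized so that an input of +/-1 maps to +/-1."""
    return math.tanh(drive * sample) / math.tanh(drive)


if __name__ == "__main__":
    for b in (0.0, 0.5, 1.0):
        print(b, round(brightness_to_cutoff(b), 1), "Hz")
    print(round(soft_clip(0.5), 3))
```

A logarithmic curve is used because equal steps in brightness then produce roughly equal perceived steps in filter brightness; a linear map would crowd most of the audible change into the dark end.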

Process blueprint: start with a ready-made frame of base parameters, then adjust it via editor notes. A brand-safe kit helps editors keep arcs coherent. With factory-like presets, creating consistent tones across campaigns becomes straightforward: raw footage is transformed into sonic texture while cost drops and artistry is preserved. A dedicated-manager workflow keeps the result aligned with product goals.

Techniques list: parameter groups for background, frame, and texture layers. Good practice includes documenting swaps, creating checklists, and maintaining a log in the editor project. Documented swaps map directly to final mix decisions and improve how the audience perceives the result, whether or not you plan to reuse assets across scenes.

Implementation checklist: define hero cues, test multiple passes, measure realism by comparing against reference footage, and weigh cost against benefit. From the initial pass to the final mix, track progress in your editor notes, then export a compact preset pack for future scenes.

Layering Textures and Modulation for Immersive Pads

Start by layering a slow-attack sub pad with a long release, then stack a cloud-like texture tuned with a soft high-pass to keep bass clarity. This approach preserves space within mix while maintaining body of sound.

Add another layer of detuned voices or wavetable textures as a gentle foil that adds color. Use a light filter sweep and a small reverb to avoid crowding; aim for a cloud-like presence that remains detailed when played alongside other voices. This setup supports high-quality performances by artists who stay within brand guidelines, and it helps transfer ideas quickly to dedicated-manager sessions and e-learning workflows.

Modulation: route an LFO to filter cutoff with a subtle depth, and pair it with an envelope that sculpts the attack of each layer so volume changes stay natural in the space. Keep rates low and use tempo-synced values for cohesion across tracks; this makes transitions between textures less abrupt.
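A tempo-synced LFO on filter cutoff can be worked through numerically. The sketch below assumes a sine LFO, a depth expressed as a fraction of the base cutoff, and one cycle every eight beats; these choices and the function names are illustrative, not settings prescribed by the text.

```python
import math


def synced_lfo_rate_hz(bpm: float, beats_per_cycle: float) -> float:
    """LFO frequency for one full cycle every `beats_per_cycle` beats."""
    return bpm / 60.0 / beats_per_cycle


def modulated_cutoff(base_hz: float, depth: float, rate_hz: float, t: float) -> float:
    """Cutoff at time t (seconds) under a sine LFO; depth is a fraction of base_hz."""
    return base_hz * (1.0 + depth * math.sin(2.0 * math.pi * rate_hz * t))


if __name__ == "__main__":
    rate = synced_lfo_rate_hz(bpm=120, beats_per_cycle=8)  # slow, tempo-locked sweep
    for t in (0.0, 1.0, 2.0, 3.0):
        print(t, "s ->", round(modulated_cutoff(800.0, 0.15, rate, t), 1), "Hz")
```

At 120 BPM, eight beats last four seconds, so the LFO runs at 0.25 Hz and the cutoff drifts between 85% and 115% of its base value.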

Turning these layers into a well-balanced pad demands quick listening checks. In practice, share plans with brand teams, request feedback from other artists, and keep support channels active to stay aligned. Use upscaler-grade samples and integrate them into e-learning workflows so that every layer contributes a high-quality part and stays convincing in a performance context.

| Layer | Technique | Settings | Notes |
| --- | --- | --- | --- |
| Base Sub | Slow attack, long release | Cutoff ~120 Hz; Depth 0.15; Resonance 0.25 | Preserves low-end clarity |
| Texture Layer | Detuned voices | Detune ±6 semitones; Filter 400–800 Hz (HP) | Adds color without mud |
| Space Reverb | Large hall | Time 2.2 s; Mix 25% | Expands space, smooths transitions |
| Delay Pad | Ping-pong | Time 320 ms; Feedback 12% | Extends atmosphere |

Creating Dynamic Transitions and Time-based Effects

Begin by setting the tempo in BPM and anchoring transitions to the beat grid to get smooth motion across videos. Avoid abrupt shifts; durations vary from 0.25 to 1.2 seconds depending on speed and narrative rhythm. At 120 BPM a beat lasts 0.5 seconds, so an eighth-note crossfade takes 0.25 seconds and a sixteenth-note crossfade takes 0.125 seconds; wipes of about 1 second (two beats) create cinematic momentum.

Guideline: if you double tempo, cut durations by half; if tempo slows, extend accordingly. This keeps transitions responsive without breaking musical flow, helping both designer teams and marketing managers to maintain consistency across assets.
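The halving rule follows directly from the beat length, which is 60 divided by the tempo. The sketch below works this out in seconds and in frames; the function names and the example frame rates are assumptions for illustration.

```python
def beat_seconds(bpm: float) -> float:
    """Length of one beat (quarter note) in seconds."""
    return 60.0 / bpm


def transition_seconds(bpm: float, beats: float) -> float:
    """Duration of a transition that spans `beats` beats at the given tempo."""
    return beats * beat_seconds(bpm)


def transition_frames(bpm: float, beats: float, fps: float) -> int:
    """Same duration expressed as a whole number of video frames."""
    return round(transition_seconds(bpm, beats) * fps)


if __name__ == "__main__":
    for bpm in (60, 120, 240):
        secs = transition_seconds(bpm, beats=0.5)   # eighth-note crossfade
        print(bpm, "BPM:", secs, "s,", transition_frames(bpm, 0.5, fps=24), "frames at 24 fps")
```

Defining transitions in beats instead of seconds means a tempo change rescales every duration automatically.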

- Tempo-synced crossfades: set crossfade durations 0.25–0.6 seconds for quick cuts; extend up to 1.2 seconds for mood shifts; measure in frames based on FPS; videos will feel faster yet controlled.

- Masks and morphs: use edge masks to blend between visuals, enabling smooth replacement when assets change; facial cues can drive gradual reveal during explainer sequences.

- Layered time-based effects: drive delay, reverb, and filter sweeps off tempo; enable per-layer automation to respond to music or spoken words; capture dynamic movement while keeping a coherent style.

- Motion curves and velocity: apply ease-in and ease-out curves to transitions; faster adjustments on high-energy sections create a hero moment while slower shifts support narration.

- Workflow for production: prepare a short test script, run quick iterations, compare speed variance across devices, and document which settings boost sales impact in client demos.

- Facial-driven pacing: in face-centric videos, sync visual transitions with micro-expressions for an authentic feel; around ambitious cuts, adjust timing to keep the sequence readable for viewers.
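The ease-in and ease-out curves mentioned in the list can be illustrated with a smoothstep function driving a crossfade. This is one common easing choice, used here as an assumption; the text does not name a specific curve, and `crossfade_gains` is a hypothetical helper.

```python
def ease_in_out(t: float) -> float:
    """Smoothstep easing: 0 at t=0, 1 at t=1, with zero slope at both ends."""
    t = min(1.0, max(0.0, t))  # clamp progress to [0, 1]
    return t * t * (3.0 - 2.0 * t)


def crossfade_gains(progress: float):
    """Return (outgoing, incoming) opacity for an eased crossfade."""
    w = ease_in_out(progress)
    return 1.0 - w, w


if __name__ == "__main__":
    steps = 4
    for i in range(steps + 1):
        p = i / steps
        out_gain, in_gain = crossfade_gains(p)
        print(f"progress {p:.2f}: out {out_gain:.3f}, in {in_gain:.3f}")
```

Because the slope is zero at both ends, the transition starts and stops gently, while the midpoint still crosses at equal opacity.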

Implementation tips: use explainer-grade software to map beat positions to animation keys, keep a dedicated style guide, and maintain a single project manager who oversees tempo rules across scenes. When you discover efficient patterns, reuse them in future campaigns to deliver fantastic, repeatable results quickly. Once you nail rhythm alignment, you enable faster iteration cycles, capture tighter edits, and deliver more compelling visuals behind every brand story. This approach, often adopted in industry pipelines, helps designers play with speed while preserving clarity, making dynamic transitions a core asset in every video or demo reel.

Live Performance Setup: Controllers, Gestures, and Visual Triggers

Invest in a capable controller combo: a compact 8×8 pad matrix, a pressure-sensitive fader, and a touch strip. This setup responds precisely, keeps gestures smooth, and looks crisp on stage. Map pads to samples, encoders to parameter tweaks, and a jog wheel to scene navigation for fast, reliable actions.

Link motion to graphics through OSC or MIDI routing, using extensive mappings to avoid overload. Color-coded LEDs on pads offer immediate feedback, while a projector stream or halo LEDs can animate sequences. Keep triggers deterministic, precise, and smooth to sustain momentum during a live presentation.

Archive a library of scenes as files with clear naming so they can be recalled quickly across venues. Extensive gesture mappings turn complex manipulation into single actions and transform energy into a coherent, polished look. Post-production tweaks adjust timing, mix, and automation after capture. Highlight peak moments with a brief gesture.

Within this space, design object-level controls: each button or pad maps to a single scene or graphic object, enabling rapid transitions. Animate transitions with small, subtle pacing, and avoid abrupt jumps to keep momentum.
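A one-pad-per-scene mapping for an 8×8 grid can be sketched as a lookup table keyed by MIDI note. The base note of 36 and the row-major layout are assumptions, since pad numbering varies by controller; the scene names are placeholders.

```python
GRID_SIZE = 8
BASE_NOTE = 36  # assumed MIDI note of the first pad; check your controller's layout


def pad_to_note(row: int, col: int) -> int:
    """MIDI note for a pad at (row, col) in a row-major 8x8 grid."""
    if not (0 <= row < GRID_SIZE and 0 <= col < GRID_SIZE):
        raise ValueError(f"pad ({row}, {col}) is outside the {GRID_SIZE}x{GRID_SIZE} grid")
    return BASE_NOTE + row * GRID_SIZE + col


def build_scene_map(scene_names):
    """Assign scenes to pads in row-major order, one scene per pad."""
    if len(scene_names) > GRID_SIZE * GRID_SIZE:
        raise ValueError("more scenes than pads")
    return {BASE_NOTE + i: name for i, name in enumerate(scene_names)}


def scene_for_note(scene_map, note: int):
    """Deterministic lookup: the same note always yields the same scene, or None."""
    return scene_map.get(note)


if __name__ == "__main__":
    scenes = build_scene_map(["intro", "verse", "drop", "outro"])  # placeholder names
    print(scene_for_note(scenes, pad_to_note(0, 2)))
```

Keeping the map a plain dictionary makes triggers deterministic and easy to archive alongside the scene files.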

Waitlist access can accompany presets tied to a running show; provide files and instructions for other performers. UI elements reinforce branding; this innovation supports a concise presentation. Within your workflow, this approach transforms audience perception by delivering intuitive, realistic cues.

Export, Integration, and Compatibility with DAWs and Game Engines

Export kit should consist of high‑quality WAV/AIFF samples plus a compact instrument preset and MIDI map. This approach allows longer project iterations, facial capture‑linked articulations, and anime‑inspired textures; you can replace single samples without rebuilding entire instrument. Include metadata for timing, root key, loop points, and cost per asset.
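The per-asset metadata could travel with the kit as a JSON manifest. The sketch below is one possible layout, not a standard format: the field names, the use of MIDI note numbers for root keys, and loop points measured in sample frames are all assumptions.

```python
import json

REQUIRED_FIELDS = {"file", "root_key", "tempo_bpm", "loop_start", "loop_end", "cost"}


def build_manifest(kit_name, samples):
    """Validate sample entries and return a manifest dict ready for JSON export."""
    for entry in samples:
        missing = REQUIRED_FIELDS - set(entry)
        if missing:
            raise ValueError(f"{entry.get('file', '?')}: missing {sorted(missing)}")
        if not 0 <= entry["loop_start"] < entry["loop_end"]:
            raise ValueError(f"{entry['file']}: loop points out of order")
    return {"kit": kit_name, "samples": list(samples)}


def replace_sample(manifest, old_file, new_entry):
    """Swap a single sample entry without rebuilding the other entries."""
    samples = [new_entry if s["file"] == old_file else s for s in manifest["samples"]]
    return build_manifest(manifest["kit"], samples)


if __name__ == "__main__":
    # Hypothetical entry: root_key as a MIDI note, loop points in sample frames.
    pad = {"file": "pad_c3.wav", "root_key": 48, "tempo_bpm": 120,
           "loop_start": 0, "loop_end": 96000, "cost": 1.5}
    manifest = build_manifest("demo-kit", [pad])
    print(json.dumps(manifest, indent=2))
```

Validating loop points at export time catches broken loops before the kit reaches a DAW or engine.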

DAWs demand multi‑format assets: sample imports (WAV/AIFF) and sampler mappings (SFZ, Kontakt). Optional plugin builds in VST3, AU, AAX boost compatibility across Ableton Live, FL Studio, Cubase, Logic Pro, Reaper. Provide a clear per‑project path to streamline setup, speeding onboarding for composers and developers.

Game engines accept samples as Audio Clip assets or as embedded modules. Unity, Unreal Engine, and custom builders read WAV/OGG with engine‑specific metadata. For precise alignment with visuals, embed timing cues and connect to facial animation curves; this makes lifelike sound moments easier to achieve. Assets turned into ready‑to‑run scenes speed integration here.

Process milestones: batch export as a complete set, attach per‑project tags, keep naming consistent; this helps developers, composers, and builders manage large libraries.
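Consistent naming is easiest to enforce with a single helper that builds every filename. The pattern below (project, instrument, root key, zero-padded version) is a hypothetical convention chosen for illustration; the text does not prescribe one.

```python
import re


def slug(text: str) -> str:
    """Lowercase a label and replace runs of non-alphanumerics with hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def asset_filename(project: str, instrument: str, root_key: str,
                   version: int, ext: str = "wav") -> str:
    """Build a filename such as 'night-drive_cloud-pad_C3_v002.wav'."""
    return f"{slug(project)}_{slug(instrument)}_{root_key}_v{version:03d}.{ext}"


if __name__ == "__main__":
    print(asset_filename("Night Drive", "Cloud Pad", "C3", 2))
```

Zero-padded versions keep files in order when sorted alphabetically, which helps when browsing large libraries.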

Operational gains: faster iteration cycles, cost control, and better timing sync. Blog notes highlight milestones here; through a builder approach, three workflows align with complete projects. Supporting a billion asset instances across campaigns keeps quality high while cost stays in check.

