Recommendation: Start with a tightly scoped, AI-assisted workflow that handles asset tagging, reference-material synthesis, and rough-cut suggestions. Expect projected reductions of 25% to 50% in first-pass editing time, while richer metadata improves search across archives. You'll notice faster feedback cycles and fewer bottlenecks in post-production.

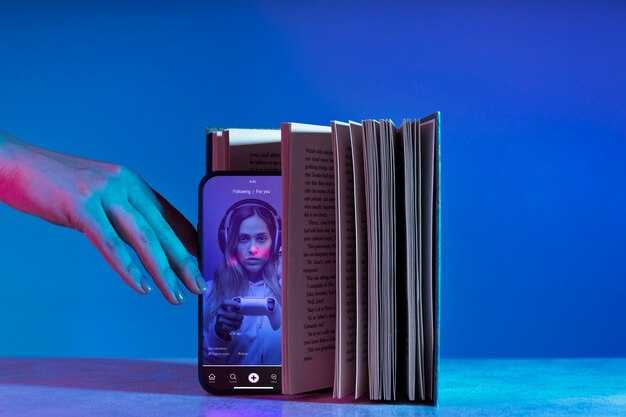

Integrate AI into your main editing interface and into invideo-like platforms. Combining automation with human craft lets a team produce more explainers, tutorials, and animations that work across formats. The key enabler is an interface that surfaces AI-generated cuts and captions in a single click, which lowers cost and shortens turnaround.

The approach delivers a wide range of outputs (from short explainers to longer tutorials) by assembling a reference library of assets, lower-thirds, and color palettes. AI can propose fixed templates and multiple animation variations, enabling rapid testing of styles while keeping creative decisions with human storytellers. You'll gain more confidence in creative choices as the options multiply.

Across projects, the new workflow can yield measurable gains in speed and quality. Automated captions, transcripts, and scene tagging let you scale output without growing headcount linearly; this is especially valuable for international markets, where localization work can be heavy. Cost-effectiveness improves as you reuse components, and combining AI with hands-on craft shortens iteration times, particularly for mission-critical narratives.

For teams seeking actionable steps, start with a tutorial that demonstrates a small AI toolkit for automating tagging, transcripts, and rough cuts; document the reference assets and share the results across channels. The goal is to show the benefits and avoid pitfalls: cover a diversity of formats, evaluate performance quarterly, and pilot invideo as a platform for testing how automation and craft combine. Track metrics such as time savings, revision rate, and cost-effectiveness to justify broader adoption, particularly in cross-functional contexts.

Practical steps to align AI with your video production workflow

Adopt a 30-minute AI integration sprint each morning to map capabilities onto core tasks, building a living catalog of prompts and assets that accelerates iteration, reduces hesitation, and speeds up creative cycles.

Define a modular workflow that starts with text briefs; AI then suggests story notes, shot lists, and rough edits for shorts, keeping a tight loop between description, drafting, and export. This structure suits creative crews and keeps output aligned with the stories audiences respond to.

Introduce automated templates for editing and voiceover, with checks that flag problems in tone, pacing, and factual accuracy; let automation handle repetitive tasks such as generating captions from speech-to-text output and time-stamped transcripts for editors.
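To make the captioning step concrete, here is a minimal sketch that turns time-stamped speech-to-text segments into an SRT caption file. It assumes your speech-to-text engine returns start and end times in seconds plus the spoken text; the `Segment` type and the function names are hypothetical, not any particular tool's API.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """One time-stamped chunk of speech-to-text output (hypothetical shape)."""
    start: float  # seconds from the start of the clip
    end: float    # seconds from the start of the clip
    text: str


def srt_timestamp(seconds: float) -> str:
    """Format seconds as an SRT timestamp: HH:MM:SS,mmm."""
    total_ms = int(round(seconds * 1000))
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def to_srt(segments: list[Segment]) -> str:
    """Render segments as numbered SRT cues separated by blank lines."""
    cues = []
    for index, seg in enumerate(segments, start=1):
        cues.append(
            f"{index}\n"
            f"{srt_timestamp(seg.start)} --> {srt_timestamp(seg.end)}\n"
            f"{seg.text.strip()}\n"
        )
    return "\n".join(cues)


if __name__ == "__main__":
    demo = [
        Segment(0.0, 2.5, "Welcome to the tutorial."),
        Segment(2.5, 61.04, "Let's start with the rough cut."),
    ]
    print(to_srt(demo))
```

The same segments, kept as data, also give editors the time-stamped transcript mentioned above.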

Build a framework that democratizes access to resources: a centralized catalog of shots, B-roll, and audio accessible across crews and locations, enabling rapid composition and cross-pollination of ideas.

Create a feedback loop in which editors, writers, and voiceover artists review AI-generated options in real time; this increases collaboration and surfaces variations that fit both the brand and its audience.

Keep costs down by reallocating existing resources and using AI to accelerate tasks, improving throughput without extra hires. Use templates, prompts, and automated routines along the pipeline to deliver faster turnarounds and new formats such as text-driven shorts.

Address hesitation with a phased pilot in small crews; measure capability gains and share the results across business units to accelerate adoption and build confidence.

Track metrics such as time saved, iteration speed, and cost per output; add quality and compliance checks; and maintain a living catalog of case studies to inform future cycles, so the impact stays measurable.
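A minimal sketch of how these three metrics could be computed per project. The `ProjectRun` fields are hypothetical; in particular, `baseline_hours` assumes you have a pre-AI estimate for comparable work to measure against.

```python
from dataclasses import dataclass


@dataclass
class ProjectRun:
    """One project cycle; all fields are hypothetical and come from your own logs."""
    baseline_hours: float  # estimated effort for comparable work without AI
    actual_hours: float    # effort actually logged with AI assistance
    iterations: int        # review/revision rounds completed
    elapsed_days: float    # calendar days from brief to delivery
    total_cost: float      # dollars spent on labor and tools
    outputs: int           # finished deliverables (cuts, shorts, etc.)


def summarize(run: ProjectRun) -> dict[str, float]:
    """Time saved, iteration speed, and cost per output for one run."""
    if run.baseline_hours <= 0 or run.elapsed_days <= 0 or run.outputs <= 0:
        raise ValueError("baseline_hours, elapsed_days and outputs must be positive")
    saved = run.baseline_hours - run.actual_hours
    return {
        "time_saved_hours": saved,
        "time_saved_pct": 100 * saved / run.baseline_hours,
        "iterations_per_day": run.iterations / run.elapsed_days,
        "cost_per_output": run.total_cost / run.outputs,
    }


if __name__ == "__main__":
    print(summarize(ProjectRun(40, 28, 6, 3, 1200, 4)))
```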

Map your current video workflow and identify AI touchpoints

Start with a five-stage map, on a single template, that traces the route from idea to release. Focus on the most time-consuming handoffs, measure cycle times, and assign an owner to each stage. The objective is to expose AI touchpoints that increase throughput without compromising quality. Keep the process lean, and document which focus areas clients expect and which metrics demonstrate impact.

| Stage | Current touchpoints | AI touchpoints | Expected impact | Notes |
|---|---|---|---|---|
| Ideation & Scripting | Brief, references, client inputs, constraints | AI prompts to brainstorm topics, auto-outlining, draft scripts, hook detection | 20–40% faster drafting; tone preserved | Keep the brand voice consistent with guidelines |
| Capture & Logging | Shot lists, on-set notes, talent coordination | AI-assisted shot suggestions, scene tagging, auto captions | 15–30% less pre-shoot prep; better metadata quality | Can draw on sensor data; tagging scales across clips |
| Editing & Assembly | Rough cut, color, audio, asset management | Auto rough cut, clip rating, color matching, template-based edits | 25–50% faster turnaround; consistent look | Templates let consistent edits scale across projects |
| Review & Approval | Internal review, client feedback, revisions | Auto summary of changes, sentiment analysis, version control | Shorter cycles; clearer change requests | Client feedback loop; structured notes speed iteration |
| Distribution & Performance | Publishing, thumbnails, descriptions, performance tracking | Best-time posting, auto thumbnail hooks, clip-based A/B tests, dashboards | Greater reach; data-driven iteration | Expand across the ecosystem; run five experiments per project |

Five concrete steps to implement AI touchpoints quickly: 1) map the current flow and quantify cycle times; 2) pilot AI touchpoints on a single project; 3) add a lightweight template and automation for low-risk steps; 4) give a concise walkthrough to bring teams on board; 5) scale across projects and monitor metrics in a dashboard. Add a heatmap of touchpoints to visualize bottlenecks, plus a reusable template for weekly reviews. This approach rests on a clear ownership model and an engineering mindset; it adapts to any workflow and to client expectations, eases fears, and shows that augmentation improves outcomes for your own teams and partners.
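Step 1 (quantifying cycle times) and the bottleneck heatmap can start from something as simple as the sketch below. It assumes you can export a start and end timestamp per stage per project; the stage names mirror the table above, and the function names are hypothetical.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from statistics import mean


def stage_cycle_times(events):
    """Mean cycle time in hours per stage.

    `events` is an iterable of (stage, start, end) tuples with datetime values,
    one per stage per project (a hypothetical export format).
    """
    durations = defaultdict(list)
    for stage, start, end in events:
        durations[stage].append((end - start).total_seconds() / 3600)
    return {stage: mean(hours) for stage, hours in durations.items()}


def rank_bottlenecks(cycle_times):
    """Stages sorted longest-first, with each stage's share of total time."""
    total = sum(cycle_times.values())
    ranked = sorted(cycle_times.items(), key=lambda kv: kv[1], reverse=True)
    return [(stage, hours, hours / total if total else 0.0) for stage, hours in ranked]


if __name__ == "__main__":
    t0 = datetime(2024, 1, 8, 9, 0)
    events = [
        ("Editing & Assembly", t0, t0 + timedelta(hours=10)),
        ("Editing & Assembly", t0, t0 + timedelta(hours=6)),
        ("Review & Approval", t0, t0 + timedelta(hours=4)),
    ]
    for stage, hours, share in rank_bottlenecks(stage_cycle_times(events)):
        print(f"{stage:<22} {hours:5.1f} h  {share:.0%}")
```

The share column is what a heatmap would color; the longest stages are the first candidates for an AI touchpoint.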

Select AI tools for scripting, planning, editing, color, and audio

Begin with a scripting assistant that auto-generates outlines, dialogue tweaks, and scene notes; connect it to a planning hub that outputs shot-by-shot breakdowns, batched scripts, and a catalog of assets. This reduces rework and speeds approvals, though it depends on native integrations with the editor and stock libraries. Run trials, which don't require a large crew, to quantify time savings and dollars spent, then refine the stack accordingly.

- Scripting and planning
  - Choose an AI tool that delivers scene breakdowns, alternative tone options, and conversion-ready beat sheets, and that exports to the storyboard formats your editors use; favor integrations that keep the workflow seamless.
  - Link it to a planning module that batches tasks, sets milestones, and maintains a catalog of assets, ensuring smooth coordination between departments and fast handoffs.
  - Prioritize outputs tailored for short-form content; look for editor-friendly templates, license controls, and clear trial pricing in dollars.

- Editing and color
  - Select an editor with AI-assisted trimming, scene refinement, and resolution management across clips; enable batch application of looks to preserve consistency.
  - Invest in native color tools with automatic color matching and LUT generation; make sure plugins integrate without leaving the primary workflow.
  - Build an inspiration catalog of stock footage and preset looks; it enables rapid iteration and speeds up delivery even if you start with only incremental capabilities.

- Audio
  - Adopt AI-driven noise reduction, voice isolation, and adaptive EQ to improve intelligibility; coordinate with the editor timeline and automate export to multiple formats, including short-form assets.
  - Maintain a stock sound library with a license catalog that tracks licenses and usage across projects.
  - Use trials to compare cost and feature sets; refine the mix and keep spending aligned with project value, for both short-form and long-form pieces.
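One way to compare trials on a common footing is to convert hours saved into dollars and subtract each tool's cost. This is a sketch under assumptions: the hourly rate and the hours-saved figures are inputs you would measure yourself, and `Trial` and `rank_trials` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Trial:
    """Summary of one tool trial (hypothetical fields; figures are yours to measure)."""
    tool: str
    category: str                  # e.g. "scripting", "color", "audio"
    monthly_cost: float            # dollars
    hours_saved_per_month: float   # measured during the trial


def rank_trials(trials, hourly_rate):
    """Rank tools by net monthly value: hours saved * hourly_rate - cost.

    A negative value means the tool cost more than the time it saved.
    """
    scored = [
        (t.tool, t.hours_saved_per_month * hourly_rate - t.monthly_cost)
        for t in trials
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    trials = [Trial("B", "audio", 300, 5), Trial("A", "color", 50, 10)]
    print(rank_trials(trials, hourly_rate=40))
```

Net value is only one lens; feature fit and integration with the primary editor still need a human call.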

Define roles: who approves AI outputs, who handles final edits

Designate one approver who signs off on AI outputs before publication. This gatekeeper justifies changes, verifies facts, checks that the tone follows policy, and records a concise rationale for each decision. A formal log provides traceability across each batch of items and limits drift from the creator's voice.
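A sketch of what one entry in such an approval log might look like, stored as append-only JSON lines. The field names, the three allowed decisions, and the JSON-lines format are assumptions for illustration; the point is that every decision carries an approver and a rationale.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone

DECISIONS = {"approved", "changes_requested", "rejected"}  # hypothetical set


@dataclass(frozen=True)
class ApprovalRecord:
    """One entry in a hypothetical approval log for AI-generated items."""
    item_id: str
    batch_id: str
    approver: str
    decision: str        # one of DECISIONS
    rationale: str       # the concise justification the approver records
    ai_disclosed: bool   # whether AI involvement is disclosed on publication
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def __post_init__(self):
        if self.decision not in DECISIONS:
            raise ValueError(f"unknown decision: {self.decision}")
        if not self.rationale.strip():
            raise ValueError("a rationale is required for every decision")


def append_to_log(record: ApprovalRecord, path: str) -> None:
    """Append the record as one JSON line; existing entries are never rewritten."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(asdict(record)) + "\n")
```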

The two-track workflow separates oversight from execution: the approver, usually a strategist or compliance lead, reviews generated content for accuracy, source attribution, and alignment with brand strategy; they justify edits and approve the final wording. The final edits lead handles structure, voiceover scripts, captions, and pacing, ensuring the narrative remains coherent across the batch. Behind-the-scenes refinements focus on language, timing, and visuals to keep a consistent voice across items.

Process logistics: keep the approval load manageable to avoid bottlenecks while maintaining guardrails. Because AI produces so many outputs, only a fraction of drafts can go through the full two-track review. The workflow draws on a collaborative set of skills: creator, copywriter, voiceover pro, and legal reviewer. A 64month milestone helps quantify progress in coherence and cycle time, and structured feedback from all roles keeps further iterations possible. The remaining items in each batch also benefit from this structure, and stakeholder testimonials support the approach.

Risk management: unchecked AI content calls for guardrails such as source validation, citation standards, and cadence rules. The approver ensures AI involvement is disclosed where needed, and the editor keeps the voice consistent with the creator's intent. The result is more differentiation across outputs and less risk of misaligned campaigns. Limit the number of unchecked items in any batch to protect relevance.

Measurement and scaling: track metrics such as accuracy rate, approval turnaround, and listener sentiment toward the voiceover. Keep batches to 10–20 items to maintain pace, and aim for steady growth, with both roles contributing to coherent, reliable output. The approach empowers creators and support staff and builds a culture that grows more capable at scale. Stakeholder testimonials point to improved outcomes and stronger differentiation across content lines.
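To keep batches inside the suggested 10–20 range, items can be split into the fewest batches that respect the 20-item cap, with the remainder spread evenly. A minimal sketch (the function name is hypothetical):

```python
from math import ceil


def make_batches(items, max_size=20):
    """Split items into the fewest near-equal batches of at most max_size items."""
    if max_size <= 0:
        raise ValueError("max_size must be positive")
    n = len(items)
    if n == 0:
        return []
    k = ceil(n / max_size)        # fewest batches that respect the cap
    base, extra = divmod(n, k)    # the first `extra` batches get one more item
    batches, start = [], 0
    for i in range(k):
        size = base + (1 if i < extra else 0)
        batches.append(items[start:start + size])
        start += size
    return batches


if __name__ == "__main__":
    print([len(b) for b in make_batches(list(range(41)))])
```

Because the batch count is the smallest that satisfies the cap, sizes differ by at most one item, so with `max_size=20` any input of 10 or more items yields batches of 10–20; smaller inputs form a single batch.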

Set up a repeatable review process with AI-assisted feedback

Implement a modular, AI-assisted review loop that ingests assets, outputs structured feedback, and writes the results to PostgreSQL; target a 30–40% shorter review cycle within the first two months.

Create a standard feedback template with these fields: reference, timestamp, reviewer_role, impressions, suggested_changes, and a scoring metric. The AI can propose refinements alongside each item, tied to the agreed criteria and to practical actions.
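The template above could be captured as a small typed record that serializes to JSON. This is a sketch: the role list and the 1–5 score scale are assumptions, and `FeedbackItem` is a hypothetical name.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone

ROLES = {"editor", "writer", "voiceover", "approver"}  # hypothetical role list


@dataclass
class FeedbackItem:
    """Standard feedback template; field names follow the text above."""
    reference: str                 # asset ID or timecode the note refers to
    reviewer_role: str
    impressions: str
    suggested_changes: list[str]
    score: int                     # scoring metric, assumed here to be 1-5
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def __post_init__(self):
        if self.reviewer_role not in ROLES:
            raise ValueError(f"unknown reviewer_role: {self.reviewer_role}")
        if not 1 <= self.score <= 5:
            raise ValueError("score must be between 1 and 5")

    def to_json(self) -> str:
        """Serialize the whole template, keeping non-ASCII text readable."""
        return json.dumps(asdict(self), ensure_ascii=False)
```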

For each asset, run three iterations; in each, an OpenAI model produces three variations of feedback, which are compared against a reference rubric. At their best, the results can be hard to distinguish from human-written feedback.
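Comparing variations against a reference rubric can be as simple as a weighted average of per-criterion scores. In this sketch, the rubric weights and the 0–1 per-criterion scores are assumed inputs (from human reviewers or a separate grading step); no OpenAI API call is shown, and the function names are hypothetical. Applied once per iteration, it picks the strongest of the three variations.

```python
def weighted_score(scores, rubric):
    """Weighted average of per-criterion scores in [0, 1].

    `rubric` maps criterion -> weight; criteria missing from `scores` count as 0.
    """
    total_weight = sum(rubric.values())
    if total_weight <= 0:
        raise ValueError("rubric weights must sum to a positive number")
    return sum(w * scores.get(c, 0.0) for c, w in rubric.items()) / total_weight


def pick_best(variations, rubric):
    """Return (index, score) of the highest-scoring feedback variation."""
    scored = [(i, weighted_score(v, rubric)) for i, v in enumerate(variations)]
    return max(scored, key=lambda pair: pair[1])


if __name__ == "__main__":
    rubric = {"accuracy": 2, "actionability": 1, "tone": 1}
    variations = [
        {"accuracy": 0.5, "actionability": 1.0, "tone": 1.0},
        {"accuracy": 1.0, "actionability": 1.0, "tone": 0.5},
        {"accuracy": 0.8, "actionability": 0.8},
    ]
    print(pick_best(variations, rubric))
```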

Automating metric collection reduces manual toil while preserving the ability to tailor feedback at scale. Track time-to-feedback, acceptance rate, and changes implemented; store the results in PostgreSQL; and monitor improvements across iterations.
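A sketch of the per-iteration rollup, assuming each feedback record carries an iteration number, the minutes from submission to feedback, and counts of suggested and accepted changes (all hypothetical field names):

```python
from collections import defaultdict
from statistics import median


def iteration_metrics(records):
    """Roll up feedback records into per-iteration metrics, ordered by iteration.

    Each record is a dict with keys 'iteration', 'minutes_to_feedback',
    'suggested', and 'accepted'.
    """
    grouped = defaultdict(list)
    for r in records:
        grouped[r["iteration"]].append(r)
    out = {}
    for it in sorted(grouped):
        rows = grouped[it]
        suggested = sum(r["suggested"] for r in rows)
        accepted = sum(r["accepted"] for r in rows)
        out[it] = {
            "median_minutes_to_feedback": median(r["minutes_to_feedback"] for r in rows),
            "acceptance_rate": accepted / suggested if suggested else 0.0,
            "changes_implemented": accepted,
        }
    return out
```

Reading the output from one iteration to the next shows whether feedback is arriving faster and being accepted more often.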

Hold a weekly clinic to review AI-produced notes so you can compare outcomes across groups. Draw on podcasts and other outside sources to calibrate practices, align with institutions pursuing practical improvements, and aim for substantially higher efficiency.

Limit the scope to just 3–5 scenarios per asset to keep the review focused.

Data layer: keep PostgreSQL as the central store for feedback, changes, and metrics. Use a reference schema that tracks variations, supports JSON fields, and indexes on asset_id.
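A possible shape for that schema, written as PostgreSQL DDL held in Python strings. Table and column names are hypothetical; the `JSONB` column stores the full feedback template, and the index on `asset_id` supports per-asset lookups. The insert helper assumes a DB-API driver that uses `%s` placeholders, such as psycopg2.

```python
import json

# Hypothetical PostgreSQL schema for the review loop; names are illustrative.
SCHEMA_SQL = """
CREATE TABLE IF NOT EXISTS review_feedback (
    id            BIGSERIAL   PRIMARY KEY,
    asset_id      TEXT        NOT NULL,
    iteration     SMALLINT    NOT NULL,
    variation     SMALLINT    NOT NULL,
    reviewer_role TEXT        NOT NULL,
    score         SMALLINT,
    feedback      JSONB       NOT NULL,   -- full feedback template as JSON
    created_at    TIMESTAMPTZ NOT NULL DEFAULT now()
);
CREATE INDEX IF NOT EXISTS review_feedback_asset_id_idx
    ON review_feedback (asset_id);
"""

INSERT_SQL = """
INSERT INTO review_feedback
    (asset_id, iteration, variation, reviewer_role, score, feedback)
VALUES (%s, %s, %s, %s, %s, %s)
"""


def insert_params(asset_id, iteration, variation, feedback):
    """Build the parameter tuple for INSERT_SQL.

    The feedback dict is serialized to a JSON string here, which PostgreSQL
    casts to JSONB on insert.
    """
    return (
        asset_id,
        iteration,
        variation,
        feedback["reviewer_role"],
        feedback.get("score"),
        json.dumps(feedback),
    )
```

With a psycopg2 cursor, usage would be `cur.execute(SCHEMA_SQL)` once, then `cur.execute(INSERT_SQL, insert_params(...))` per feedback item.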

Risks and governance: enforce access control, audit trails, and policy-aligned use of OpenAI services; keep data within your institution's boundaries; maintain privacy and compliance across platforms; and coordinate with partner institutions to maximize impact.

Outcomes: higher-quality outputs, less rework, and a competitive edge. The modular approach adapts to different workflows, and repeated iterations produce changes you can measure against the reference metrics.

Track performance with real-time metrics and post-milestone reviews

Begin with a centralized, modular dashboard that aggregates real-time data from every step of asset creation. Build a combined metrics suite, driven by the underlying data, that spotlights bottlenecks, cost overruns, and cycle-time variance, so the required value streams stay visible.

Define core KPIs: cycle time, throughput per batch, revision rate, and on-time delivery. Display trend lines, color-coded health indicators, and threshold alerts that surface deviations within seconds.
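Color-coded health indicators reduce to mapping each KPI onto bands. A minimal sketch, assuming you set a warning and an alert threshold per KPI and record whether higher values are better; the thresholds in the usage below are placeholders, not recommendations, and the names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Threshold:
    """Warning and alert bands for one KPI (values are placeholders)."""
    warn: float
    alert: float
    higher_is_better: bool


def health(value, t):
    """Map a KPI value to 'green', 'amber', or 'red'."""
    if t.higher_is_better:
        if value <= t.alert:
            return "red"
        return "amber" if value <= t.warn else "green"
    if value >= t.alert:
        return "red"
    return "amber" if value >= t.warn else "green"


def check_kpis(current, thresholds):
    """Status for every KPI that has both a current value and thresholds."""
    return {
        name: health(current[name], t)
        for name, t in thresholds.items()
        if name in current
    }


if __name__ == "__main__":
    thresholds = {
        "cycle_time_days": Threshold(warn=5, alert=8, higher_is_better=False),
        "on_time_rate": Threshold(warn=0.9, alert=0.8, higher_is_better=True),
        "revision_rate": Threshold(warn=0.3, alert=0.5, higher_is_better=False),
    }
    current = {"cycle_time_days": 6, "on_time_rate": 0.95, "revision_rate": 0.55}
    print(check_kpis(current, thresholds))
```

Any KPI that turns red is a natural trigger for the threshold alerts described above.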

Post-milestone reviews: within 24 hours, run a structured assessment that compares planned versus actual results, captures actions, and refreshes guidance for the next cycle. Include demos and a concise list of topics to align stakeholders and carry lessons into expansion plans.
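The planned-versus-actual comparison can start as a per-metric variance report. This sketch assumes planned and actual values are numeric and keyed by the same metric names; the 10% tolerance is an arbitrary default to adjust, and the function name is hypothetical.

```python
def milestone_variance(planned, actual, tolerance=0.10):
    """Planned-vs-actual report: {metric: (delta, relative_delta, flagged)}.

    `flagged` is True when the relative deviation exceeds `tolerance`.
    Metrics missing from `actual` are skipped.
    """
    report = {}
    for metric, plan in planned.items():
        if metric not in actual:
            continue
        delta = actual[metric] - plan
        if plan == 0:
            rel = 0.0 if delta == 0 else float("inf")
        else:
            rel = delta / plan
        report[metric] = (delta, rel, abs(rel) > tolerance)
    return report


if __name__ == "__main__":
    print(milestone_variance({"days": 10, "cost": 5000}, {"days": 12, "cost": 5200}))
```

Flagged metrics become the first agenda items for the review, and the captured actions feed the next cycle's guidance.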

Use AI-generated assets to accelerate iteration while tracking dollars spent, revenue momentum, and ROI across segments. Keep a manual override available and the process transparent. During reviews, use a one-minute selection routine for rapid decisions on asset revisions.

Design expansion guidance that supports your own workflows, languages, and topics, with a repertoire of short-form assets across series formats. Favor a modular blend of AI-generated and manual inputs to keep iteration fast and cost-efficient, and cut the number of steps per asset so you can scale confidently toward larger audiences.

For scale planning, use a dedicated suite of dashboards to monitor expansion milestones, track earnings and spend, and validate each series across languages. Focus on the best-performing topics, pick the top formats, and iterate in fewer cycles to maximize long-term growth.

Operational discipline: map data ownership, schedule cadence, and governance rules, ensuring guidance remains aligned and traceable. Publish regular updates to keep stakeholders aware of progress and to support ongoing expansion efforts.

AI Won’t Replace Your Video Team, But It Will Make Them More Productive
